I didn't grow up with chalkboards. By the time I hit school, whiteboards were the standard and a computer lab was just down the hall. That was how it worked.

And when I look at a lot of the EdTech platforms being built today - especially in higher education and online learning - I realize we haven't moved as far from that world as we think. We put everything online. We made the paperwork digital. But for a lot of students, the underlying feedback loop still runs on the same slow cadence I experienced in school - just with a better web interface on top.

Think about who's actually on the other side of that loop. In most cases there's a parent who made a real investment in their child's future - someone paying for an outcome, not a subscription. And there's a student who, honestly, would often rather be doing something else. Getting a student to engage seriously with learning material is hard enough. Getting them to stay engaged semester after semester, without seeing evidence that the platform actually knows them and is working for them specifically - that's much harder.

That gap between what's promised and what's delivered is what I want to get into here. Because the tools changed, but the tempo didn't. And the platforms that are actually closing that gap are doing something different under the hood.

Going Digital and Getting Faster Turned Out to Be Two Different Things

When learning management systems started rolling out in earnest, they were a real step forward. I saw it firsthand at Edmonds Community College. Syllabi moved online. Assignments went through Canvas and similar platforms. Schools started collecting more data than ever before. That was genuinely useful progress.

But here's the thing about all that data: the insight still lagged. Teachers were reviewing test results at the end of the week, or the end of term. Dashboards were built around reporting cycles, not around what was happening during a lesson. The feedback loop moved from a stack of papers on someone's desk to a dashboard that refreshed weekly - but the rhythm didn't change.

Digital and responsive turned out to be two very different things, and student expectations started outpacing the architecture before most platforms noticed.

Two Students, One Platform That Adapts and One That Doesn't

Say two students - Jane and Jill - both start an introductory business analytics course at university at the same time. Same goal: understand the material, earn a solid grade, and set themselves up for the next semester. Their experiences could hardly be more different.

Jill's class runs the way most courses do. Weekly quizzes, a larger assessment at the end of every month, and standard homework. Feedback comes through office hours, the occasional email from the professor, maybe a group study session. It's a familiar university setup. Jill is learning the content and doing reasonably well - but she's running most of the race with blinders on. She doesn't have a clear picture of where she actually stands until a grade shows up, usually after the window to do anything about it has already closed.

What Jill's learning experience actually feels like


Jane's experience is different. Her instructor acts more as an advisor, guiding her through a platform that adjusts based on how she's doing. When Jane takes a quiz, the questions shift based on what she's getting right and wrong in that session. Behind the scenes, the platform keeps asking: How long is she taking on each question? Is her score trending up or down? Which concept makes sense to serve her next - for her specifically, based on what she's showing right now - rather than whatever comes next on the syllabus for the whole cohort?
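To make those questions concrete, here is a minimal Python sketch of in-session adaptation, assuming the platform keeps a running mastery estimate per concept. The names (`SessionState`, `record_answer`, `next_concept`), the 0.3 smoothing weight, and the 30-second "slow answer" cutoff are all hypothetical, chosen for illustration rather than taken from any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class ConceptStats:
    mastery: float = 0.5      # running estimate in [0, 1]; 0.5 means "no evidence yet"
    attempts: int = 0
    avg_seconds: float = 0.0  # mean response time for this concept


@dataclass
class SessionState:
    alpha: float = 0.3          # hypothetical weight given to the newest answer
    slow_seconds: float = 30.0  # hypothetical cutoff for a "slow" answer
    concepts: dict[str, ConceptStats] = field(default_factory=dict)

    def record_answer(self, concept: str, correct: bool, seconds: float) -> None:
        """Update the concept's estimate the moment an answer arrives."""
        stats = self.concepts.setdefault(concept, ConceptStats())
        # A correct but slow answer counts as weaker evidence of mastery.
        if correct:
            score = 1.0 if seconds <= self.slow_seconds else 0.7
        else:
            score = 0.0
        # Exponential moving average: recent answers weigh more than older ones.
        stats.mastery = (1 - self.alpha) * stats.mastery + self.alpha * score
        stats.attempts += 1
        # Incremental mean, so no answer history needs to be stored.
        stats.avg_seconds += (seconds - stats.avg_seconds) / stats.attempts

    def next_concept(self, syllabus: list[str]) -> str:
        """Serve the weakest concept; ties go to the earlier syllabus item."""
        return min(syllabus, key=lambda c: self.concepts.get(c, ConceptStats()).mastery)


session = SessionState()
session.record_answer("regression", correct=False, seconds=40)
session.record_answer("sampling", correct=True, seconds=12)
print(session.next_concept(["sampling", "regression", "hypothesis_testing"]))  # regression
```

The design choice worth noting is that every update happens per answer, inside the session, so the "what next?" decision is always based on what the student just did rather than on last week's report.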

Jane can see where her gaps are while she still has time to close them. The platform builds her a picture of her own learning as it happens. She isn't waiting for a grade to tell her how she's doing - and over a full semester, that compounds. A student who gets corrective feedback during a session makes different decisions about where to spend their study hours than one who finds out on Friday that Monday's concept didn't land.

That difference in outcome is also the difference between a platform that delivers on its promise to the parent paying for it, and one that's technically providing education but not really closing the loop.

The Research Is Clear - and Has Been for a While

RAND's study for the Bill and Melinda Gates Foundation tracked student achievement across 62 schools over two years and found significant positive effects on maths and reading performance for students in personalized learning environments compared to matched groups at conventional schools. That series of reports ran from 2014 through 2017 and remains one of the most rigorous bodies of work on this question.

A 2024 scoping review of 69 studies, available on PMC, found that 59% of those studies reported improved academic performance among students using personalized adaptive learning compared with traditional instruction. The consistent thread across the research: learning loops need to be short. The moment a concept clicks, the window to reinforce it is brief. The moment frustration starts tipping into disengagement, the window to intervene is even shorter.

A system that finds out a student was confused on Wednesday when the weekly report runs on Sunday isn't doing useful work. It's describing what already happened.
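The cost of that lag is simple arithmetic. A minimal sketch, assuming a hypothetical weekly report that runs every Sunday at midnight: `hours_until_report` (a name introduced here for illustration) measures how long a signal sits unseen before the scheduled job picks it up.

```python
from datetime import datetime, timedelta


def next_weekly_run(event_time: datetime, run_weekday: int, run_hour: int) -> datetime:
    """First scheduled run strictly after event_time (Monday=0 ... Sunday=6)."""
    days_ahead = (run_weekday - event_time.weekday()) % 7
    candidate = (event_time + timedelta(days=days_ahead)).replace(
        hour=run_hour, minute=0, second=0, microsecond=0
    )
    # If this week's run already happened (or is happening now), wait for next week's.
    if candidate <= event_time:
        candidate += timedelta(days=7)
    return candidate


def hours_until_report(event_time: datetime, run_weekday: int = 6, run_hour: int = 0) -> float:
    """How long a signal waits under a weekly batch schedule (default: Sunday 00:00)."""
    return (next_weekly_run(event_time, run_weekday, run_hour) - event_time) / timedelta(hours=1)


# A student gets confused on Wednesday 3 January 2024 at 14:00.
confused_at = datetime(2024, 1, 3, 14, 0)
print(hours_until_report(confused_at))  # 82.0
```

In an event-driven design, that wait is bounded by processing time rather than by where the event happens to fall in the reporting schedule.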

62 schools: tracked in the RAND / Gates Foundation study. Students in personalized learning environments made significant gains in maths and reading compared with a matched comparison group at conventional schools.

What Actually Has to Be True for Jane's Experience to Work

The Jane experience isn't about a better quiz interface. It's about what's running underneath it. For a platform to adjust content and pacing within the same session (not overnight), it needs to ingest student interactions as they happen, process them fast enough to act on, and return a result before the student has moved on to the next question.
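Here is a minimal in-process sketch of that ingest, process, respond loop using Python's `asyncio`, assuming each answer arrives as an event on a queue and the platform has a fixed latency budget for choosing the next item. The event shape, the `pick_next_item` rule, and the 200 ms budget are hypothetical; a production system would typically put a message broker and a shared or partitioned state store where this sketch uses an `asyncio.Queue` and plain dicts.

```python
import asyncio
import time

LATENCY_BUDGET_S = 0.2  # hypothetical: time allowed before the next question renders


def pick_next_item(recent: list[bool]) -> str:
    """Hypothetical rule: two misses in a row -> step back to a review item."""
    if len(recent) >= 2 and not recent[-1] and not recent[-2]:
        return "review"
    return "advance"


async def consumer(queue: asyncio.Queue, decisions: dict, history: dict) -> None:
    """Process each answer as it arrives and record the next-item decision."""
    while True:
        event = await queue.get()
        if event is None:  # sentinel: no more events
            queue.task_done()
            break
        answers = history.setdefault(event["student"], [])
        answers.append(event["correct"])
        decisions[event["student"]] = pick_next_item(answers)
        # Measure from ingest time, so queueing delay counts against the budget.
        elapsed = time.monotonic() - event["ingested_at"]
        if elapsed > LATENCY_BUDGET_S:
            print(f"over budget for {event['student']}: {elapsed:.3f}s")
        queue.task_done()


async def main() -> dict:
    queue: asyncio.Queue = asyncio.Queue()
    decisions: dict = {}
    history: dict = {}
    worker = asyncio.create_task(consumer(queue, decisions, history))
    for correct in (True, False, False):  # Jane's answers within one session
        await queue.put({"student": "jane", "correct": correct, "ingested_at": time.monotonic()})
    await queue.put(None)
    await worker
    return decisions


print(asyncio.run(main()))  # {'jane': 'review'}
```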

Most platforms built on batch-era infrastructure weren't designed to do that. They run scheduled jobs: data collected during the day, processed overnight, surfaced in a dashboard the next morning. That's a sensible architecture for institutional reporting. It's the wrong rhythm for a learning loop.
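Part of what makes the faster rhythm achievable is that many of the numbers a nightly job computes can also be maintained incrementally. A minimal sketch, assuming per-student accuracy is the metric of interest (the `AnswerEvent` type and both function names are hypothetical): `nightly_batch` recomputes from the full day's log, while `StreamingAccuracy` updates in constant time per event and can be read at any moment.

```python
from collections import defaultdict
from typing import NamedTuple


class AnswerEvent(NamedTuple):
    student: str
    correct: bool


def nightly_batch(events: list[AnswerEvent]) -> dict[str, float]:
    """Batch style: scan the whole log once, after the day is over."""
    totals = defaultdict(lambda: [0, 0])  # student -> [correct, attempts]
    for e in events:
        totals[e.student][0] += int(e.correct)
        totals[e.student][1] += 1
    return {s: c / n for s, (c, n) in totals.items()}


class StreamingAccuracy:
    """Streaming style: O(1) update per event, current value always available."""

    def __init__(self) -> None:
        self.correct: defaultdict[str, int] = defaultdict(int)
        self.attempts: defaultdict[str, int] = defaultdict(int)

    def update(self, e: AnswerEvent) -> float:
        self.correct[e.student] += int(e.correct)
        self.attempts[e.student] += 1
        return self.correct[e.student] / self.attempts[e.student]


log = [AnswerEvent("jane", True), AnswerEvent("jill", False), AnswerEvent("jane", False)]
live = StreamingAccuracy()
for event in log:
    print(event.student, live.update(event))  # usable mid-session
print(nightly_batch(log))  # {'jane': 0.5, 'jill': 0.0}
```

Both paths end with the same numbers; the difference is when they exist. Not every metric decomposes this neatly (exact medians or distinct counts need more state, or approximate data structures), so treat this as an illustration of the principle rather than a general recipe.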

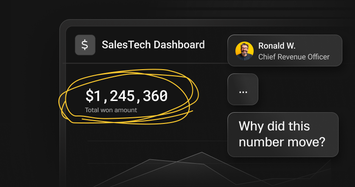

This is where it's worth naming something that comes up repeatedly when I talk to EdTech teams about their platforms. The symptoms tend to show up at the student and teacher level long before anyone has described them as an infrastructure problem.

Signs the feedback loop isn't closing fast enough - as students and teachers experience it


These aren't edge cases. They're what happens consistently when the data layer and the experience layer are out of sync. And because they show up as student frustration or slow progress rather than system errors, they often go undiagnosed for a long time.

Scale Makes This Harder to Ignore

It's easy to imagine a real-time feedback loop working for one classroom. Modern EdTech doesn't operate at that scale. Platforms are serving entire districts, national assessment programs, and global corporate training environments. When a statewide assessment window opens or a new feature rolls out to all students simultaneously, you're not processing 30 learners - you're processing hundreds of thousands, each generating signals that need to feed back into their individual experience.
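At that volume, a common pattern is to partition the event stream by learner, so each student's signals land on the same worker in order and per-student state never has to be shared across machines. A minimal sketch, assuming a hypothetical pool of 8 partitions: it uses `zlib.crc32` rather than Python's built-in `hash()`, because `hash()` on strings is randomized per process and would route the same student differently after a restart.

```python
import zlib
from collections import Counter

NUM_PARTITIONS = 8  # hypothetical worker/partition count


def partition_for(student_id: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Stable mapping: the same student always goes to the same partition."""
    return zlib.crc32(student_id.encode("utf-8")) % num_partitions


# Route 200,000 synthetic learner IDs and look at the load spread per partition.
load = Counter(partition_for(f"student-{i}") for i in range(200_000))
print(min(load.values()), max(load.values()))
```

One caveat with plain modulo routing: changing the partition count remaps most students, which is why systems that expect to resize often use consistent hashing instead.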

At that scale, a platform architecture built for nightly reporting cycles isn't slow in an abstract sense. It's concretely failing students on a specific Tuesday afternoon and not knowing about it until Thursday.

AI Made the Lag Impossible to Ignore

AI tutors, recommendation engines, and learning copilots are now a standard part of EdTech product roadmaps across online learning. They've also surfaced a problem that was already there.

These systems assume the data they're reasoning on reflects what's actually happening right now. When the underlying pipeline is batch-based, an AI tutor's guidance can feel off - technically accurate in some sense, but calibrated to the wrong moment. A student who was confused in this session but got something right on Tuesday's quiz will get feedback aimed at Tuesday's performance. Students rarely argue with an AI that feels out of sync. They just stop engaging with it, which is exactly the opposite of what the platform is trying to achieve.
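The Tuesday-quiz problem fits in a few lines. A minimal sketch, assuming every signal about a concept carries a timestamp and the tutor receives one "current view" per concept; the `Signal` type, `tutor_context`, and the 15-minute staleness threshold are hypothetical. A batch snapshot only contains what the last report saw, while a live view keeps the newest evidence and flags anything too old to treat as current.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_AGE = timedelta(minutes=15)  # hypothetical freshness threshold


@dataclass(frozen=True)
class Signal:
    concept: str
    understood: bool
    at: datetime


def current_view(signals: list[Signal]) -> dict[str, Signal]:
    """Keep only the newest signal per concept."""
    latest: dict[str, Signal] = {}
    for s in signals:
        if s.concept not in latest or s.at > latest[s.concept].at:
            latest[s.concept] = s
    return latest


def tutor_context(signals: list[Signal], now: datetime) -> dict[str, dict]:
    """Label each concept's newest signal as fresh or stale before the tutor uses it."""
    return {
        c: {"understood": s.understood, "fresh": now - s.at <= MAX_AGE}
        for c, s in current_view(signals).items()
    }


tuesday = Signal("regression", True, datetime(2024, 1, 2, 10, 0))        # Tuesday's quiz
this_session = Signal("regression", False, datetime(2024, 1, 4, 15, 55))  # confused just now
now = datetime(2024, 1, 4, 16, 0)

print(tutor_context([tuesday], now))                # {'regression': {'understood': True, 'fresh': False}}
print(tutor_context([tuesday, this_session], now))  # {'regression': {'understood': False, 'fresh': True}}
```

The first call is what a tutor fed by a batch snapshot sees: a stale "understood". The second is what it sees when the pipeline delivers the session's own signals.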

AI didn't create this problem. It just made the cost of it visible in a way that's hard to dismiss when a student is staring at a response that doesn't track with where they actually are.

What Closing the Gap Looks Like

Some platforms have already made this shift, and the outcomes are measurable rather than theoretical.

Two platforms that moved from batch reporting to real-time learning infrastructure


In both cases, the product-level change came after an architecture-level decision. The question they answered first was whether the platform could act on a student signal in the moment it arrived.

The Pace of Learning Has Outgrown the Infrastructure We Built For It

Going back to Jane and Jill: Jane ends the semester not just with a better grade, but with real awareness of how she learns - which concepts came easily, which ones she had to work at, and what that means for the classes ahead. She has confidence she actually earned. Jill put in similar effort and came out with decent marks, but she doesn't have that same clarity. She's heading into the next course without fully understanding where she landed or why.

That difference matters to the student. It matters just as much to the parent who made the investment and is trying to work out whether it was worth it.

For EdTech teams, the honest question is: where in your platform is a student waiting for feedback that the system already has the data to give them right now? That's usually where the gap between digital and responsive is hiding.

Read part 2: 4 AI Use Cases Exposing Your Learning Platform's Data Gap
