I’ve spent the last few years of my career watching organizations get excited about AI, only to discover that the real bottleneck isn’t the model but the difficulty of synthesizing data from systems that were never built for real-time decisions. In government, that gap is even starker: successful AI adoption hinges on whether operational AI can safely act on consistent, live, governed data within clear AI governance and responsible AI frameworks.

Civilian agencies carry an extra constraint the private sector often underestimates: public trust and oversight, which are foundational requirements for responsible AI in public agencies. It’s not enough for an AI system to be clever; when decisions affect public benefits, compliance, or citizen services, agencies need to show that those decisions are grounded in current, explainable information.

When I talk with government technology leaders across the public sector, nobody is asking “Should we invest in AI?” anymore. AI is already embedded in core government operations like benefits adjudication, FOIA processing, licensing, inspections, and citizen contact centers—the places where frontline staff are already stretched thin and small efficiency gains translate directly into better service and less burnout.

The hard part is the environment AI lands in. Most civilian agencies don’t get to start fresh. They’re operating decades-old systems of record that can’t be turned off, navigating strict privacy and data-sharing rules, and working within budget cycles that reward incremental upgrades instead of sweeping reinventions. On top of that, every major decision can be revisited by citizens, auditors, inspectors general, and oversight bodies who expect a clear story about how outcomes were reached.

GAO reviews of legacy IT reinforce what I’ve seen in large enterprises: only a small percentage of systems are fully modernized, while a long tail of critical applications is still in production and only partially documented for replacement. That isn’t just “tech debt”; it’s structural risk that drives up maintenance costs.

Despite those constraints, AI technology is already changing how government work gets done. Agencies are using it to summarize case files, classify documents, draft correspondence, and mine historical data for patterns in workloads and outcomes. For the people doing the work, AI is not abstract: it’s the difference between going home overworked and exhausted, and going home on time.

The friction shows up when AI is expected to reflect what happened today, not last week. Many systems still depend on batch pipelines and snapshots: nightly warehouse loads, scheduled refreshes, and offline processes that are fine for dashboards but brittle for decisions in the flow of work.

You see the gap in very human ways:

A caseworker opens an AI summary that doesn’t reflect yesterday’s updates in the system of record.

A reminder or recommendation triggers based on status that changed hours ago.

A citizen calls, confused, because the “system” contradicts what they already fixed.

Under the hood, legacy architectures make this hard to fix. Batch ETL introduces latency and fragility. Analytical jobs compete with operational workloads for the same resources. The same datasets are copied into multiple tools “just to be safe,” which leaves behind a data governance nightmare. Lineage lives in a handful of experts’ heads instead of being enforced by design.

Then there’s the PII problem. To support analytics and reporting, sensitive data is copied out of systems of record into secondary platforms again and again. Each copy is another access pattern, another audit trail, another potential incident. Over time, you end up with multiple “sources of truth” and a governance story nobody can explain in one sitting.

From an AI perspective, this is where trust breaks in the public sector. A copilot grounding its suggestions on partial, delayed views of reality can be completely consistent with its inputs and still misaligned with live operations. In benefits, compliance, or citizen-facing workflows, that’s how you end up with appeals, complaints, and hard questions from oversight bodies.

When agencies respond by keeping AI “advisory only,” adding manual checks, or limiting scope, it usually isn’t because they’re skeptical about AI in general. They simply don’t trust the data path enough to let AI sit in the critical path.

In almost every conversation about AI in government, I come back to the same point: “faster” is not the primary goal. The goal is responsible AI adoption that operates on current, authoritative data inside guardrails everyone understands. That depends on a few things working together:

Freshness: Data reflects the present, not a batch window.

Availability: Systems hold up under real workloads, not just in test environments.

Predictability: Performance and access patterns are stable, not surprising.

Governance: Controls live at the data layer, not as an afterthought in a slide deck.

Minimal Duplication: One source of truth—fewer copies, less risk.
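To make the freshness property less abstract, here is a minimal sketch of a guard that an AI component could run before acting on a record. The function name `is_fresh` and the five-minute tolerance are my own illustrative assumptions, not part of any specific product or agency standard.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(ai_view_as_of: datetime,
             source_updated_at: datetime,
             max_lag: timedelta) -> bool:
    """Return True if the AI's view of a record is recent enough to act on.

    ai_view_as_of:     when the data the AI is reading was captured
    source_updated_at: last change to the record in the system of record
    max_lag:           hypothetical tolerance chosen by the agency
    """
    # Positive lag means the system of record changed after the AI's copy was taken.
    lag = source_updated_at - ai_view_as_of
    return lag <= max_lag


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    nightly_snapshot = now - timedelta(hours=8)  # e.g. last batch load
    tolerance = timedelta(minutes=5)             # illustrative value only

    # A record edited just now, read through last night's snapshot, is stale.
    print(is_fresh(nightly_snapshot, now, tolerance))
```

A check like this does not fix a batch pipeline, but it makes staleness visible so a copilot can decline to recommend rather than act on an outdated status.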

Traditional data stacks, tuned for retrospective analytics, weren’t designed to satisfy all of this at once. The result is a patchwork of tools and controls wrapped around fragmentation—a setup that’s expensive, fragile, and still leaves gaps where AI and humans see different versions of the truth.

This is the problem space where I focus my work. SingleStore isn’t trying to be the model, the chatbot, or the policy framework. It’s a real-time data infrastructure layer aimed directly at the part of the stack that ultimately dictates whether artificial intelligence can be trusted in production by public agencies.

The idea is straightforward:

Use a hybrid transactional/analytical processing (HTAP) architecture so transactional and analytical workloads share the same data rather than competing across disconnected copies.

Support real-time ingestion and low-latency queries so AI can see the same state humans are seeing when decisions are made.

Provide one system for SQL and vector search so retrieval-augmented generation can run directly on operational data instead of exported snapshots.

Offer cloud, on-premises, and hybrid deployment so architecture can follow mission and policy constraints, not dictate them.

In other words, fix the data path first, then let models plug into something that can actually sustain live, governed AI.
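As a rough illustration of running SQL filters and vector retrieval against the same operational data, the sketch below uses Python's built-in `sqlite3` as a stand-in store and computes cosine similarity in plain Python. Everything here is an assumption for illustration: the `case_notes` table, the toy three-dimensional embeddings, and the `retrieve` helper are hypothetical, and this is not SingleStore's API.

```python
import json
import math
import sqlite3


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def build_store() -> sqlite3.Connection:
    """Create a toy operational table holding both status and embeddings."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE case_notes "
        "(case_id TEXT PRIMARY KEY, status TEXT, note TEXT, embedding TEXT)"
    )
    rows = [
        ("A-1", "open", "missing proof of income", [1.0, 0.0, 0.0]),
        ("A-2", "closed", "missing proof of income", [1.0, 0.0, 0.0]),
        ("A-3", "open", "address change request", [0.0, 1.0, 0.0]),
    ]
    conn.executemany(
        "INSERT INTO case_notes VALUES (?, ?, ?, ?)",
        [(c, s, n, json.dumps(e)) for c, s, n, e in rows],
    )
    return conn


def retrieve(conn: sqlite3.Connection, query_embedding: list[float],
             status: str, k: int = 1) -> list[tuple[str, str]]:
    """Filter on live status with SQL, then rank the survivors by similarity."""
    rows = conn.execute(
        "SELECT case_id, note, embedding FROM case_notes WHERE status = ?",
        (status,),
    ).fetchall()
    scored = [(cosine(query_embedding, json.loads(e)), cid, note)
              for cid, note, e in rows]
    scored.sort(reverse=True)
    return [(cid, note) for _, cid, note in scored[:k]]


if __name__ == "__main__":
    conn = build_store()
    query = [0.9, 0.1, 0.0]  # toy embedding of "income documents missing"
    print(retrieve(conn, query, "open"))

    # The case is resolved in the same store the retriever reads from,
    # so the next retrieval reflects it immediately.
    conn.execute("UPDATE case_notes SET status = 'closed' WHERE case_id = 'A-1'")
    print(retrieve(conn, query, "open"))
```

The point of the sketch is the shape of the flow, not the storage engine: because the status filter and the similarity ranking read the same rows, there is no exported snapshot for the retriever to fall behind.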

In highly regulated, high-stakes environments like cybersecurity and financial services, the bar for real-time, trustworthy data infrastructure is already extremely high. The same architectural patterns that succeed there—continuous ingestion at scale, low-latency analytics, strict access controls, and audit-ready lineage—map closely to what civilian agencies need for AI-assisted decision-making and continuous monitoring.

In cybersecurity, Armis uses SingleStore to ingest over 100 billion events per day, load roughly a million rows of complex data per second, and run queries in about 1.5 seconds over days’ worth of data. That’s what it looks like when you need real-time visibility, strict security, and an audit-ready story on top of massive, continuously changing data.

In financial services, a Fortune 25 institution chose SingleStore on AWS as a foundational data platform for transactions, analytics, and vector workloads, supporting petabyte-scale data, tens of thousands of concurrent users, and millisecond-level query times for high-net-worth customer portals and trading systems. Here, low latency isn’t a nice-to-have; it’s directly tied to risk, compliance, and customer experience.

If a unified, real-time data layer can sustain those environments, it’s a strong foundation for civilian missions that look a lot like continuous monitoring and AI-assisted decision support—just under a different set of regulations and acronyms.

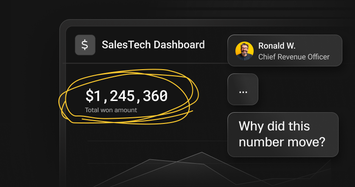

To make this concrete, I like to think about one person: the caseworker staring at a spike in benefits applications. They’re not thinking about physical data layout or vector search. They’re asking, “Can I trust what this system is telling me, and will it actually help me get through this work?”

In one version of the story, the agency deploys a generative AI copilot on top of batch-fed data. The pilot looks great. In production, the copilot is completely out of sync with what staff see in the system of record. “Incomplete” applications have already been fixed. Eligibility logic lags behind policy updates. After a week, the pattern is obvious: you have to double-check almost everything. The copilot becomes another tab to manage, not a partner. Trust drops, supervisors add review layers, and the AI project is technically “live” but not truly helping.

Now replay the same mission with a real-time data platform underneath. Transactional updates stream in as they happen. Policy changes are reflected quickly. Operational and analytical views stay aligned. When the copilot flags missing documentation or suggests an eligibility determination, the caseworker sees the same thing in the system they already rely on. Over time, the mental model shifts from “verify everything” to “let the copilot handle the heavy lifting and focus my attention where it matters most.”

On the governance side, every recommendation and override is linked to a consistent view of the data at the time. That makes it much easier to sit down with an auditor or oversight body and walk through how decisions were made. The interesting part is that the model might be identical in both scenarios. The difference is the data foundation it’s sitting on.
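One way to picture linking a recommendation to the data as it stood at the time is to store a fingerprint of the record alongside each decision. The sketch below is a simplified assumption of how that could look; the field names and the `audit_entry` and `matches_snapshot` helpers are hypothetical, and a real system would also need retention, access control, and tamper protection.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def fingerprint(record: dict) -> str:
    """Stable SHA-256 hash of a record, independent of key order."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_entry(record: dict, recommendation: str, actor: str,
                decided_at: Optional[datetime] = None) -> dict:
    """Build a log entry tying a recommendation to the data it was based on."""
    when = decided_at or datetime.now(timezone.utc)
    return {
        "case_id": record["case_id"],
        "recommendation": recommendation,
        "actor": actor,  # e.g. "copilot" or a caseworker ID
        "decided_at": when.isoformat(),
        "data_fingerprint": fingerprint(record),
    }


def matches_snapshot(entry: dict, record: dict) -> bool:
    """Check whether a record is the same data the decision was based on."""
    return entry["data_fingerprint"] == fingerprint(record)


if __name__ == "__main__":
    record = {"case_id": "A-1", "status": "incomplete", "missing": ["income"]}
    entry = audit_entry(record, "request proof of income", actor="copilot")

    # Later, the applicant fixes the issue; the old decision no longer
    # matches the current state, which is exactly what an auditor wants to see.
    updated = dict(record, status="complete", missing=[])
    print(matches_snapshot(entry, record), matches_snapshot(entry, updated))
```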

Putting AI into mission workflows doesn’t require a big-bang rewrite of everything. The agencies making progress tend to follow a pragmatic pattern.

Architecturally, the pattern looks like this:

Systems of record stream into a unified operational and analytical data store instead of scattering data across tools.

SQL and vector retrieval give AI components grounded access to that data instead of periodic exports.

AI runs behind zero-trust controls, with clearly defined interfaces and access policies.

Human review, logging, and records retention are built into workflows from day one.

On the adoption side, the formula that tends to work is as follows:

Start with high-volume, rules-driven workflows where better decisions and shorter cycle times are easy to measure.

Define mission-linked KPIs—cycle time, backlog, error rates—rather than just model-centric metrics.

Align data controls with NIST AI RMF early so governance is a design constraint, not a last-minute hurdle.

Build auditability into the system from the start, so authorization-to-operate is based on concrete evidence instead of promises.
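For the mission-linked KPIs mentioned above, a small sketch can show how cycle time, backlog, and error rate might be computed from case data. The `Case` fields and the choice to treat "reversed on review" as the error signal are my own illustrative assumptions; each agency would define these measures for its own mission.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class Case:
    opened: date
    closed: Optional[date] = None     # None while the case is still pending
    reversed_on_review: bool = False  # hypothetical proxy for a decision error


def kpis(cases: list[Case], as_of: date) -> dict:
    """Compute mission-level metrics for all cases as of a given date."""
    closed = [c for c in cases if c.closed is not None and c.closed <= as_of]
    backlog = sum(
        1 for c in cases
        if c.opened <= as_of and (c.closed is None or c.closed > as_of)
    )
    cycle_days = [(c.closed - c.opened).days for c in closed]
    return {
        "median_cycle_days": median(cycle_days) if cycle_days else None,
        "backlog": backlog,
        "error_rate": (sum(c.reversed_on_review for c in closed) / len(closed))
                      if closed else None,
    }


if __name__ == "__main__":
    sample = [
        Case(date(2024, 1, 1), date(2024, 1, 11)),
        Case(date(2024, 1, 5), date(2024, 1, 10), reversed_on_review=True),
        Case(date(2024, 1, 8)),
    ]
    print(kpis(sample, as_of=date(2024, 1, 31)))
```

Tracking these numbers before and after an AI rollout gives a mission-level comparison that does not depend on any model-centric metric.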

Incremental architectural change tied to mission outcomes is easier to fund, easier to defend, and easier to adapt as policies, leadership, or priorities evolve.

After years at the intersection of data platforms and AI, my view is straightforward: if you want AI to matter in government, you have to treat data infrastructure as a mission system, not a utility.

A fast but stale AI system is just another way to disappoint the people relying on it. A powerful but opaque system is another way to lose trust. But AI grounded in authoritative, current data, inside clear governance boundaries, has a real chance to reduce backlogs, improve service delivery, and strengthen accountability.

That’s why I focus on the data layer. If we get that foundation right—real-time, high-concurrency, governed by design—then AI can move from glossy demos and pilot decks into durable, trustworthy operations. And in the public sector, that’s the only outcome that really counts.
